When Jon Haidt Called Me a Cheater

Science, Snark, and the Moral Mind

In April 2015, Chelsea Schein and I wrote a blog post titled “Making Sense of Moral Disagreement: Liberals, Conservatives and the Harm-Based Template they Share,” about how liberals and conservatives have the same moral mind. Then, in October of the same year, Jonathan Haidt, Jesse Graham, and Peter Ditto published a post on the same blog titled “Volkswagen of Moral Psychology.”

Chelsea Schein and I had been publishing papers arguing that all moral judgment revolves around perceptions of harm — not five separate “moral foundations,” as Haidt’s hugely influential theory claimed. Their response compared us to a corporation caught rigging its diesel engines to cheat on emissions tests. They wrote that our results came from “a testing garage rigged in [our] favor” and concluded: “It may be time for a recall.”

The actual substance of the post contained no allegation of fabricated data and no charge of misconduct. The complaints were about analytical choices — how we split our samples, how we defined our variables. Ordinary scientific disagreement, framed as a scandal.

To be clear: Chelsea and I never cooked any books. I bring up this claim because it illustrates something important about how science actually works — and about a debate that matters for how we understand moral conflict.

In this post, I want to talk about how science progresses, what it’s like to challenge a dominant paradigm, and why the model we use to understand the moral mind matters for all of us. I’m writing this piece now, more than ten years after that blog post, because we just had an important paper come out, one that I think really helps to further shift the paradigm in moral psychology.

Science Is a Contact Sport

Science is social. It’s pushed forward by people advocating for theories, who get attached to those theories, who feel threatened when the theories are challenged. But it’s mostly pushed forward by the much larger group of researchers who don’t have a dog in any particular fight — people who just want frameworks that seem compelling and, more importantly, useful for their own work. What helps them do research? What gives them tools they can actually use?

This social dimension is something established scientists tend to understand, but it’s often overlooked by people outside academia and underappreciated by students. Thomas Kuhn appreciated it. In The Structure of Scientific Revolutions, Kuhn argued that science doesn’t progress by the steady accumulation of facts. It lurches forward through paradigm shifts — periods where a dominant framework shapes what questions get asked, what counts as evidence, and what tools everyone uses, until anomalies pile up and a new framework replaces the old one. Replacement isn’t just about who has better data. It’s about social dynamics, institutional momentum, and what instruments people can pick up and run with.

The paradigm fight featured in the Volkswagen blog post is between two theories of the moral mind.

Moral Foundations Theory argues that the mind contains several distinct moral mechanisms, or “foundations.” Liberals rely mostly on care and fairness. Conservatives draw on those plus loyalty, authority, and purity. Different moral minds, different moral languages.

The alternative — the one my lab has been building for over a decade — argues that everyone shares the same moral mind, organized around a single thing: the perception that someone is being victimized. We call this the Theory of Dyadic Morality, because the template at the heart of moral judgment is dyadic — an intentional agent causing damage to a vulnerable victim.

We originally called it “harm-based morality,” but we’ve recently shifted to talking about “victimhood.” Partly that’s genuine theoretical growth — victimhood captures the full richness of what people perceive better than “harm” does. But partly it’s strategic. MFT had defined “harm” so narrowly — basically physical violence — that when we said “it’s all about harm,” people pictured punching. We meant something much broader: the intuitive perception that a vulnerable entity is being damaged.

Paradigms evolve in response to criticism and social forces. Ours did too.

These competing theories matter because the world is in moral conflict. You feel it at Thanksgiving, you see it on social media, you hear it in congressional hearings. Liberals and conservatives don’t just disagree about policy — they seem to inhabit different moral universes. How we model the moral mind shapes how we understand that conflict, and whether we think it’s bridgeable.

The paper my collaborators and I just published — twelve studies, multiple methods, years of work — is, I think, the most important piece of evidence yet for why the dominant model is wrong. But to understand why the paper matters, you need to understand where the debate started.

Is Sex with Your Sibling Harmless?

For decades, the key question in moral psychology has been: is sex with your sibling harmless?

Not a joke. In the year 2000, Haidt ran a now-famous study where people read carefully designed scenarios, including one about consensual incest between adult siblings. No pregnancy risk, no lasting damage, no one finds out. The point was to show that people condemn these acts even though they can’t articulate a reason. Haidt called this “moral dumbfounding” and argued it was evidence that morality goes beyond harm — that we have separate mechanisms (“foundations”) for purity, loyalty, and so on.

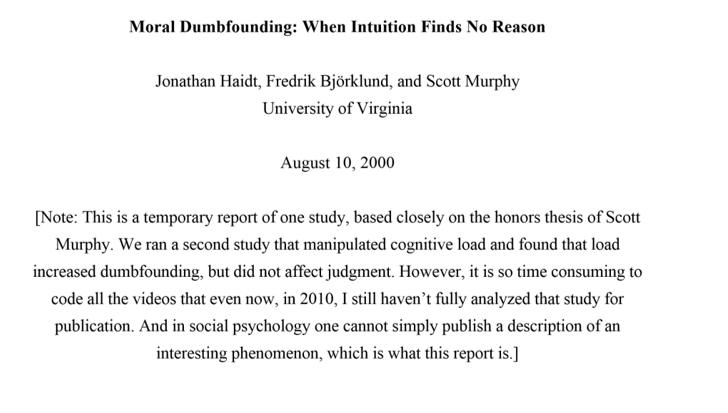

It’s important to note that this study has never been published in a peer-reviewed journal. You can find it online as a “temporary report” dated August 2000. In a note on the first page, Haidt writes that he ran a second study but “it is so time consuming to code all the videos that even now, in 2010, I still haven’t fully analyzed that study for publication.” It’s 2026. The foundational demonstration for the most cited theory in moral psychology never went through peer review because rigorously testing the hypotheses took too much time.

When I started in moral psychology, I wasn’t trying to challenge anyone. I was curious about those scenarios. Haidt’s interpretation was that people were dumbfounded — they condemned the acts but couldn’t find any harm. But that assumed the acts really were seen as harmless.

I wondered instead: what if people saw harm intuitively, the way people find flying on an airplane intuitively dangerous even when they know the statistics? Maybe the scenarios weren’t harmless at all, at least not to the people reading them. Despite the assurances that sleeping with your sibling was a-okay, perhaps people were right to feel, deep down, that it wasn’t a great idea.

Chelsea Schein, Adrian Ward and I measured whether people actually perceived harm in those scenarios, and they sure did. They thought incest damaged the sibling relationship, that taboo acts created suffering for the people involved, that violations harmed someone’s soul or their community. The only people who actually thought these scenarios were truly victimless were the liberal researchers who designed them.

Perceived Harm Predicts Everything

Study after study over the following years showed the same thing: the perception that someone is being victimized predicted moral condemnation across every type of violation — not just physically harmful violations but “purity” violations, “loyalty” violations, all of them. More perceived victimization, more condemnation. This is true for liberals and conservatives alike.

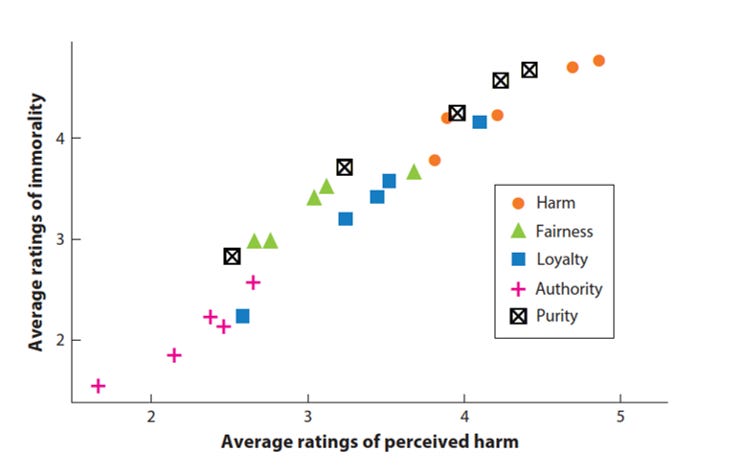

Nico Restrepo Ochoa published a particularly clean demonstration of this effect in Poetics in 2022. He measured harm perception and immorality across a wide range of moral acts — acts MFT would assign to different foundations. When you plot them, they all fall on the same line, regardless of whether the act is supposedly “about” harm, fairness, loyalty, authority, or purity.

The Theory of Dyadic Morality says this is because the moral mind works by template matching — the same cognitive process behind all categorization. When people ask “is this immoral?”, they compare the act to a template, and that template involves an intentional agent causing damage to a vulnerable victim. The closer the match, the stronger the condemnation. A CEO who harms a baby matches the template well (obvious agent obviously harming an obviously vulnerable victim). A baby who harms a CEO matches it poorly. Both involve “harm,” but they produce very different moral reactions because the dyadic structure — who’s the agent, who’s the victim, how vulnerable, how intentional — is doing the real cognitive work.

We’ve published dozens of studies on our victim-based moral minds across many journals, but it hasn’t been easy. Pushing against the dominant paradigm in moral psychology has been exactly what you’d expect from reading Kuhn. The defenders of MFT have been defensive, because that’s what happens when you challenge a paradigm — people have built careers on it, designed their studies around it, and organized their thinking with it. I get it.

After my very first journal submission criticizing MFT, I got a very long peer review back from Jon, who suggested I was wasting everyone’s time. He also attached a copy of his own dissertation. The Volkswagen post was part of the same pattern. These are the social forces Kuhn described, and they’re not unique to moral psychology — every field has them.

You Can’t Beat a Paradigm Without a Scale

Kuhn understood that, to move the field forward, it’s not enough to just show problems with the old paradigm. You also have to explain the data it was explaining. And pragmatically, you need to give people an instrument.

MFT became popular partly because of the Moral Foundations Questionnaire — a scale you could drop into any study. It doesn’t matter that the MFQ has conservatism baked in—purity is measured by rating the importance of sexual chastity, and authority is measured by rating the importance of obeying a preacher. And it doesn’t matter that the structure of MFT keeps changing from three foundations to four to five to six to, as its own architects have written, potentially “74, or perhaps 122, or 27.” It doesn’t matter that Jeremy Frimer’s papers showed the link between political orientation and foundations is weak and content-dependent — conservatives care about “purity” when it means sexual chastity, but liberals light up when purity means environmental contamination, and the same is true for loyalty (to the military vs. to labor unions).

Clearly, the foundations aren’t deep cognitive differences so much as artifacts of which specific questions ended up on the survey, but a popular survey existed, and people used it. If you have 5 morality things in your survey, at least one of them is bound to correlate with something—and significant correlations are the pathway to publication. That mattered more than any theoretical critique.

So to nudge the field, I needed two things: an account of the data MFT was capturing (the obvious fact that liberals and conservatives disagree about morality) and a scale of my own.

Who’s Vulnerable? Ask and Find Out

Assumptions of vulnerability (AoVs) are the construct that has been missing from our work, and from the debate. AoVs capture how much people assume that someone (or something) is especially vulnerable to victimization.

Everyone cares about protecting vulnerable entities from harm, but people disagree sharply about who is especially vulnerable. This insight is essential because it connects a shared harm-based mind with individual differences in moral judgments.

Abortion is a case in point: if you believe a fetus can feel pain, you’ll have very different moral views than someone who sees it as an insensate clump of cells. With immigration, progressives tend to frame undocumented immigrants as vulnerable victims seeking safety, while conservatives see them as threats to innocent citizens. Climate debates pit the suffering of ecosystems against the suffering of workers who lose jobs to environmental regulations. Debates about transgender athletes pit harm to competitors against harm to the transgender person.

In each of these cases, the disagreement isn’t about whether harm matters. It’s about who’s getting harmed.

We built an AoV scale to measure these assumptions of vulnerability. For any target, participants answer three questions on a scale from 1 (not at all vulnerable) to 5 (completely vulnerable):

“I believe that the following are especially vulnerable to being harmed.”

“I think that the following are especially vulnerable to mistreatment.”

“I feel that the following are especially vulnerable to victimization.”

Three items per target. You can measure AoVs for anything — fetuses, immigrants, corporations, LLMs, the rainforest, God. Researchers can adapt it to whatever question they’re studying, which is the whole point: it’s a tool that’s easy to use.
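For readers who want to see what scoring might look like, here is a minimal sketch. It assumes the three ratings for a target are simply averaged into one AoV score; the post does not say how the items are combined, so treat the averaging rule, the `aov_score` name, and the example responses as illustrative assumptions rather than the paper's procedure.

```python
from statistics import mean

# Short labels for the three item stems quoted above; each is rated 1-5 per target.
AOV_ITEMS = ("harmed", "mistreatment", "victimization")


def aov_score(ratings):
    """Average one participant's three 1-5 ratings for a single target.

    Averaging is an assumption made for this sketch, not a documented rule.
    """
    if len(ratings) != len(AOV_ITEMS):
        raise ValueError("expected exactly three ratings per target")
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("ratings must fall between 1 and 5")
    return mean(ratings)


# Hypothetical responses from one participant (made-up numbers, not real data).
responses = {
    "rainforests": [5, 4, 5],
    "CEOs": [2, 1, 2],
}

scores = {target: aov_score(r) for target, r in responses.items()}
print(scores)
```

Because the target is just a dictionary key, swapping in fetuses, immigrants, or LLMs requires no change to the scoring code, which mirrors the adaptability described above.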

AoVs are not themselves moral judgments. They’re beliefs about is, not ought. “Cats can feel pain” might feed into a moral view about how we treat cats, but it’s not itself a moral view. This is important because it breaks the tautology at the heart of MFT. MFT explains moral differences with moral differences (you condemn promiscuity because you have a purity foundation, and we know you have a purity foundation because you condemn promiscuity). AoVs explain moral differences with something outside morality.

Twelve Studies, One Question

The paper that just came out, “Liberals and Conservatives See Different Victims,” in PSPB, led by Jake Womick and Emily Kubin, reports twelve studies using this measure.

(The full author team on the paper: Jake Womick, Emily Kubin, Daniela Goya-Tocchetto, Nico Restrepo Ochoa, Carlos Rebollar, Kyra Kapsaskis, Sam Pratt, Helen Devine, Keith Payne, Stephen Vaisey, and me.)

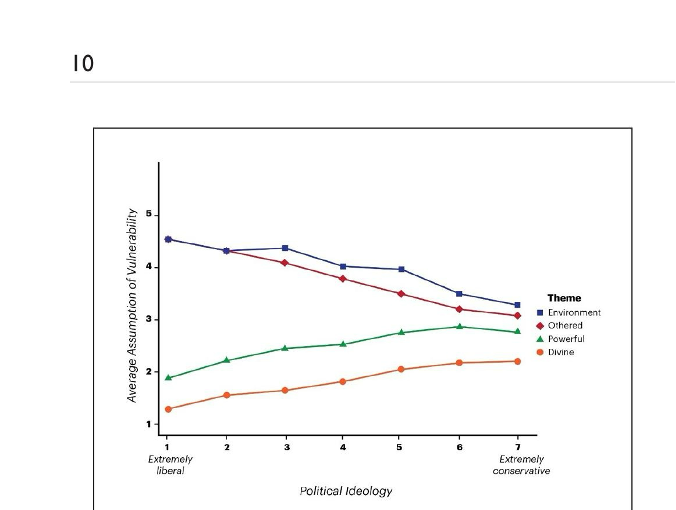

Many of today’s most divisive debates connect to four clusters of targets: the Environment (rainforests, ecosystems), the Othered (immigrants, transgender people, Black Americans), the Powerful (CEOs, police officers), and the Divine (God, the Bible). (Here is a mock-up of the AoV scale we used to assess these four clusters.)

In our studies, we found that liberals see the Environment and the Othered as more vulnerable than conservatives do. Conservatives see more vulnerability in the Powerful and the Divine than liberals do.

There are political similarities: Liberals and conservatives agree on the rank order of vulnerability — both sides see the Environment and the Othered as more vulnerable than the Powerful and the Divine. The disagreement is about how big the gaps are between these groups. Liberals amplify differences in AoVs: they see a steep hierarchy, focusing on group differences. Some groups are deeply vulnerable (oppressed), while others are basically invulnerable (oppressors). Conservatives dampen AoV differences between groups: they see vulnerability as more evenly distributed, with everyone similarly at risk (everyone is an individual).

The shape of this graph should look familiar if you’ve ever seen the classic MFT graph — big gap for liberals, smaller gap for conservatives. Both frameworks are describing the same underlying moral divide. But AoVs do it without positing separate moral mechanisms, without a survey that has conservatism baked in, and without explaining morality by pointing to morality.
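One way to put a number on "steep" versus "flat" is to subtract the average AoV for the Powerful and the Divine from the average AoV for the Environment and the Othered. The sketch below does that. The `vulnerability_gap` function and both example profiles are hypothetical, made up to show the arithmetic; this is not the analysis reported in the paper, and the numbers are not data.

```python
from statistics import mean

# Cluster names follow the four clusters described above.
HIGH_CLUSTERS = ("Environment", "Othered")
LOW_CLUSTERS = ("Powerful", "Divine")


def vulnerability_gap(cluster_scores):
    """Mean AoV for Environment/Othered minus mean AoV for Powerful/Divine."""
    high = mean(cluster_scores[c] for c in HIGH_CLUSTERS)
    low = mean(cluster_scores[c] for c in LOW_CLUSTERS)
    return high - low


# Two made-up profiles with the same rank order but different spreads.
steep_profile = {"Environment": 4.5, "Othered": 4.0, "Powerful": 1.5, "Divine": 2.0}
flat_profile = {"Environment": 3.5, "Othered": 3.0, "Powerful": 2.5, "Divine": 3.0}

print(vulnerability_gap(steep_profile))
print(vulnerability_gap(flat_profile))
```

Both profiles rank the clusters the same way; only the size of the gap differs, which is the amplify-versus-dampen pattern in miniature.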

In direct comparisons with the MFQ, AoVs predicted moral judgments on hot-button issues (transgender rights, environmental policy, tax breaks, religious freedom) as well as or better than moral foundations, and again, without measuring morality itself. AoVs also predicted real charitable behavior and showed up in implicit cognition, not just self-report. (Separable moral foundations never show up in implicit cognition tests, despite being argued to be “intuitions.”)

AoVs are also causal, not just correlational. In one study, participants read about a female CEO who refused to give money to a homeless person at night. Some were asked to think about ways the CEO could be vulnerable to harm. Others thought about the homeless person’s vulnerability. The manipulation shifted moral judgments: emphasize the homeless person’s vulnerability and the CEO’s behavior looks worse; emphasize the CEO’s vulnerability and it looks less bad.

All these studies make the idea of harm-based morality useful to other scientists and especially useful to people trying to understand our political divide. And useful ideas are the ones that get adopted. (An idea being true also makes it useful.)

A Shifting Paradigm: A Slide in Chicago

A few weeks ago, my student Danica Dillion was at SPSP 2026 in Chicago. She sent me a photo from a talk by Killian McLoughlin, from Molly Crockett’s lab at Princeton. The slide cited dyadic morality as a unifying template — as something that seems obviously true — rather than as a “but see also this theory” footnote. That felt new.

One slide doesn’t mean the paradigm has shifted. But I’ve been going to SPSP for a long time, and I can feel something changing. The question used to be “is harm really central?” Now it’s “okay, harm is central; what do we do with that idea?” The MFQ keeps showing poor cross-cultural validity. Moral foundations fail to predict people’s everyday moral concerns and their judgments about hot-button issues. The number of foundations keeps changing. Meanwhile, evidence for a common victim-based template keeps accumulating from multiple labs.

Most people, even most scientists, have a sanitized picture of how scientific progress works. The popular version is that researchers compare evidence and the better idea wins. In practice, paradigms shape what counts as a legitimate question, what methods are acceptable, whose findings get taken seriously. Challenging a paradigm means arguing against the priors of the people who review your papers and fund your grants. When evidence goes against the dominant view, the first reaction is dismissal (this idea is absurd) and the second reaction is resistance (this idea is wrong). That’s just how it works. Understanding that is important for understanding why any field — moral psychology included — takes so long to correct course.

What’s at Stake

Questions about our moral minds matter beyond academia. If MFT is right — if liberals and conservatives literally have different moral minds — then bridging divides requires something like translation between alien languages: reframe your message in the other side’s moral vocabulary. And that approach has had mixed results.

If our account is right, the challenge is different. To understand a conservative who opposes gay marriage, you don’t need to learn a foreign moral language. You need to understand that they genuinely perceive harm — to souls, to marriage, to children — even if you think those harms are imaginary. To understand a liberal who wants to defund the police, you need to understand that they see police as agents of harm against vulnerable communities, not as vulnerable public servants.

Everyone cares about victims. The disagreement is about who counts as one. Once you see it that way, you can have a conversation — share evidence, share experiences, make vulnerability visible — instead of talking past each other in different moral languages.

This paper was years of work by a big team, and it ties together over a decade of research by many brilliant people. When I started this work, I wasn’t looking for a fight with anyone, including Jon Haidt (who was gracious enough to speak in my very first symposium at SPSP). My first foray into harm perception was just a questionnaire asking whether people might see some “harmless wrongs” as harmless. They didn’t. That initial finding pulled me into a decade of arguing: in journals, at conferences, and occasionally in blog posts with inflammatory titles.

But something I’ve been thinking about lately is that maybe the fight isn’t really between me and Jon Haidt. Maybe it’s between the ideas themselves. There’s this concept from memetics that ideas are a kind of organism, competing for survival in the ecology of human minds. We think we’re the ones doing the fighting, but maybe the ideas are fighting through us. We’re the hosts, and the theories of moral psychology are the true agents.

I find that thought weirdly comforting, because it means you can separate the people from the paradigms. Jesse Graham, the second author on the Volkswagen post, is a friend and one of the funniest people I know. Pete Ditto is an amazing person and mentor, and the only social psychologist I’ve ever gone surfing with (we saw dolphins). Jon Haidt and I will likely never be friends, but I genuinely respect his writing. He’s probably one of the best storytellers in the social sciences.

The ideas we champion are in conflict. We are not.

And the idea that people can separate themselves from their positions is, really, the whole point of the research we do in the lab (and write about here). You can disagree about who’s a victim and still sit down at the same table. Liberals and conservatives share the same moral mind, the same deep concern for protecting the vulnerable. They just disagree about who the vulnerable are. That’s a hard disagreement, but it’s a deeply human one, and one you can actually talk about.

I study and teach about people overcoming differences, and you can live what you teach. At Thanksgiving dinner, and even in moral psychology.

Very fascinating piece. I will continue to follow. I’m curious about this practice you identify called “template matching”. What in your view determines the kinds of template someone will use in any given situation? I have read, going back to Gordon Allport’s book on the nature of prejudice, that prejudiced people have a harder time reading other people. So I would predict that such a person would develop a template that sees a lot of harm in violating promises and oaths, whereas someone who is better at reading others would be more forgiving of broken promises and oaths because they would still feel like they understood a person and could understand why they did it. This would suggest there is some underlying moral structure that explains why some templates get formed and others do not. I would imagine that some template formation is also cultural.

Fascinating. I wonder how this aligns with the hypothesis of 'morality as cooperation', or perhaps the research linking political orientations to psychological perceptions of boundaries and hierarchies? I will say though, I do think it generally true that people are poor at articulating their moral intuitions as Haidt observed- and that universities tend to make people not divulge their true moral intuitions because they can't necessarily rationalize them in the 'acceptable' ways of doing so. The ideas you present actually slightly align with my thinking regarding hierarchical thinking and morality; while not nearly as well-written or scientific as this article, see it here https://moralstructure.substack.com/p/the-evolutionary-psychology-of-social?r=hnzyk&utm_campaign=post&utm_medium=web